Canadian Politicians Cannot Blame an AI Chatbot for a Horrific Shooting Canada’s Own Institutions Missed

When a tragedy occurs, the instinct to assign blame is understandable. Fingers are pointed, rhetoric gets heated, and calls for accountability mount.

However, we cannot allow knee-jerk reactions to the fallout of a horrific event to be used to push a pre-existing policy agenda, especially before the ink dries on the official police reports.

In this specific scenario, we’re talking about the unconscionable shooting that took place in Tumbler Ridge, British Columbia on February 10, 2026, where 8 people were slain, and 27 were injured by gunfire before the depraved shooter took their own life.

The Blame Game

In the aftermath, a group of Canadian ministers, including AI Minister Evan Solomon and Justice Minister Sean Fraser, have taken to blaming an AI chatbot for the failure to report suspicious activity to law enforcement.

This came to light through a scoop by the American newspaper the Wall Street Journal, which exclusively reported on the shooter’s use of ChatGPT in the months prior. The reporting claims that OpenAI’s moderation teams debated what to do with the various conversation logs, including whether to report them to Canadian authorities, but instead chose to suspend the account in the summer of 2025.

This week, federal officials summoned executives from OpenAI to Ottawa to explain their policies and propose “concrete actions” to avoid this in the future. In front of reporters yesterday, these ministers “expressed disappointment” at the responses they heard from the company. Both Solomon and Fraser raised the possibility that more legislation will follow.

The implication, barely concealed, is that a chatbot interaction somehow contributed to an act of violence, and that Canadian law, specifically the still-languishing Online Harms Act, should be expanded to hold AI platforms legally liable for what their users do.

This is not serious policymaking. It is crisis opportunism, and we should call it out.

Let’s be clear about what the little evidence we have actually shows. An individual made a choice to commit violence. That individual interacted with an AI chatbot and subsequently had their account suspended because they violated the terms of service. The same allegedly happened on the Roblox platform.

OpenAI’s escalation and reporting procedures were not perfect. No one is claiming otherwise. The company banned the shooter’s account months before the attack but determined the activity did not meet the threshold for notifying law enforcement at the time. That is for them to answer in a specific and targeted way.

That judgment call deserves scrutiny and moral clarity. But there is an enormous difference between examining a company’s self-regulatory choices and imposing sweeping new legal obligations that would fundamentally change how these platforms operate for every Canadian.

What About the Institutional Failures?

As we’ve come to learn, the perpetrator of these attacks was, as the saying goes, known to law enforcement. There were visits to the home by police, forced hospitalizations, gun confiscations, alleged arson attacks, and intervention from a number of Canadian public institutions.

Will there be an examination of how those institutions failed the victims of that terrible day? These are complicated and emotional questions the government must be willing to ask before it assigns blame to one technological tool used by millions of Canadians.

Changing Liability Laws Is a Big Deal

Duty-of-care frameworks and expanded liability regimes do not just affect bad actors. They force platforms to over-monitor, over-filter, and over-report on all users.

The result is degraded privacy, eroded due process, and a significant expansion of the government’s ability to access information about ordinary Canadians — without warrants, without judicial oversight, without the basic legal protections that have defined our relationship with the state for generations.

As experts have already noted, “requiring platforms to report every suspicion ‘is just not workable.’” And it would significantly degrade our experiences using them.

If there are legitimate questions about whether OpenAI followed its own usage policies, or whether those policies meet a reasonable standard, those questions can be answered through targeted review and narrow reporting requirements. They won’t be answered through a blank-check legal framework that goes far beyond what we would tolerate in any other industry.

The Online Harms Act already carries significant risks for free expression and digital privacy in Canada. Using a shooting to expand its reach is not a measured policy response. It is a political maneuver dressed up in the language of public safety.

Canadians deserve policymakers who take both safety and civil liberties seriously. They need officials who understand that smart rules and real accountability are not the same thing as sweeping new government power. Let’s hope these questions get better answers in the months to come.

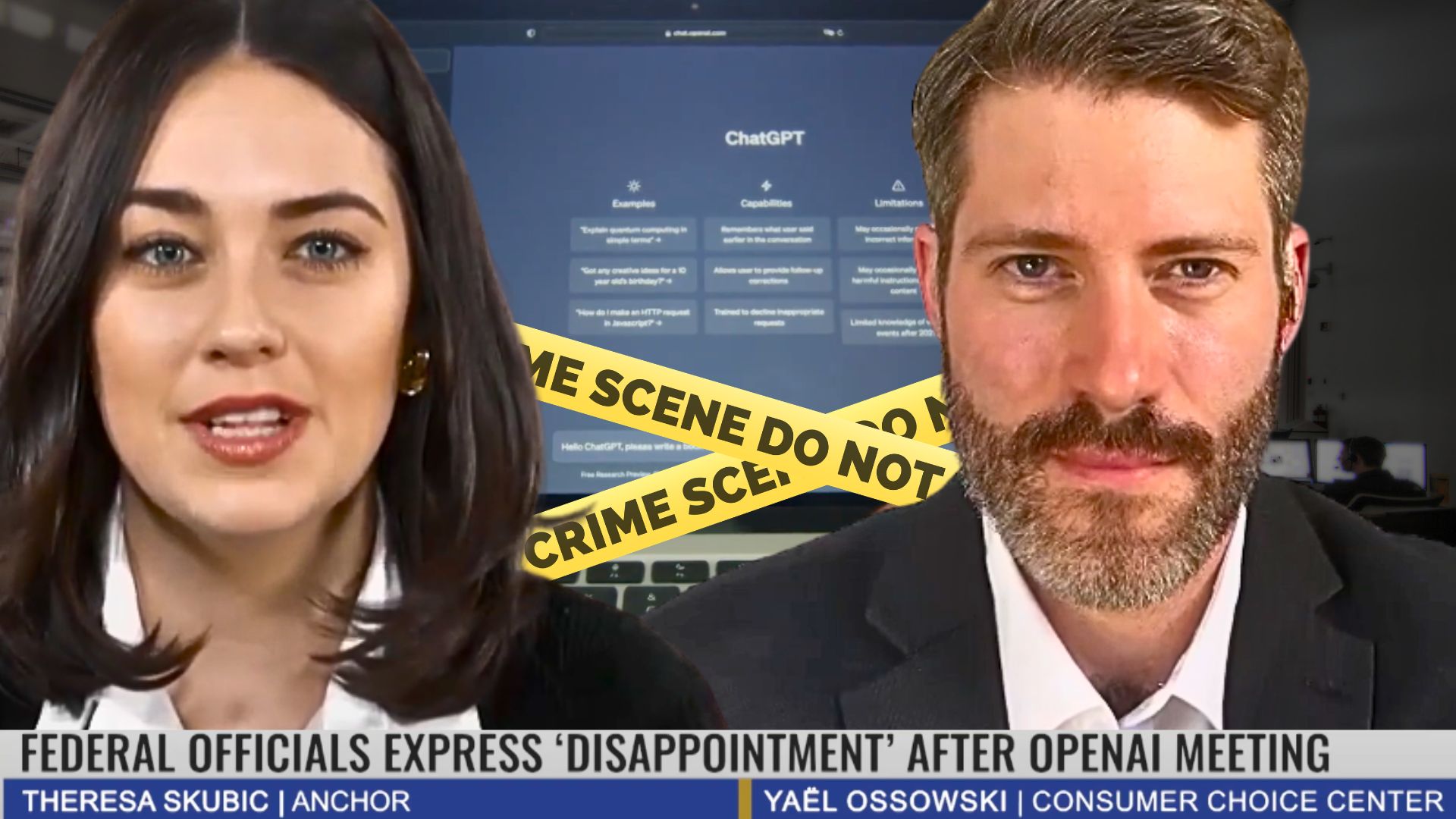

For more, I discussed this on Forum Daily News with anchor Theresa Skubic this week.

Originally published at the Consumer Choice Center.